Conversational AI is the next iteration of human-to-computer interaction – one that could replace the mouse and keyboard and become an essential advancement in how people communicate with next-generation machines. But achieving human-level language capabilities in speech-to-text systems, automated customer support, industrial machinery and robots, or personal digital assistants is not easy. Recent developments, however, bode well for the evolution and proliferation of conversational AI.

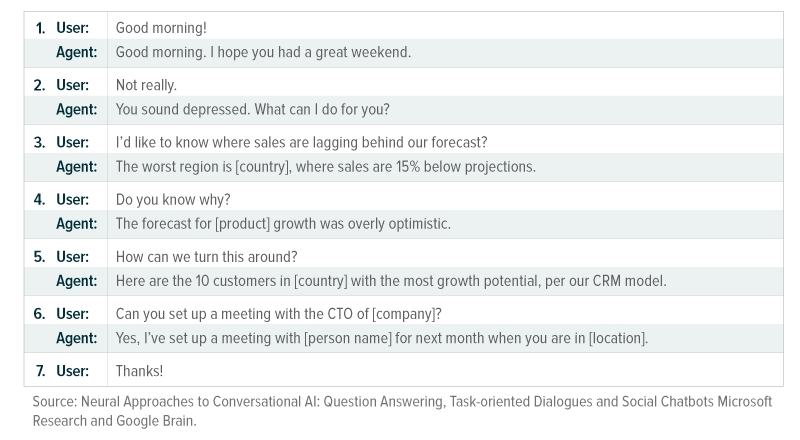

While this may look like a pedestrian conversation between two human employees, the conversation above subtly depicts the complexities of everyday speech that the world’s most advanced AI systems must decode. In this dialogue between a human user and an AI agent, the conversation shifts markedly. Turns 1 and 2 are a social chat segment. Turns 3–5 are a Q&A segment. Turns 6 and 7 are a task-completion segment.1 Beyond that, the conversation is laced with nuance, context, and social norms that the chatbot must understand and reciprocate.

We base what follows on the progress towards solving these challenges, providing insights into the latest advances in conversational AI and its vast potential.

The challenge: “Sorry, I didn’t quite get that”

Conversations between humans are extremely complex. Not only must the participants understand the words being spoken in real time, but they must also intuit the context, intonation, and non-verbal cues that can change the meaning of what’s being said. For example, an enthusiastic “GOOD MORNING” on a Friday can mean “please ask me about my weekend plans”, whereas a mumbled “g’mornin” on a Monday can signal “don’t bother me right now”, even though the words are essentially the same.

Conversational AI is the development of software programs that allow a computer to understand what a human intends to say or ask for, decipher its meaning, and communicate relevant responses. Given the complexities of human interactions, AI algorithms depend on language models that are massive in scope and complexity and backed by substantial computing power.

Personal assistants like Apple’s Siri and Amazon’s Alexa have introduced the broader consumer population to the idea of a two-way verbal interaction with a machine. While the technology behind Siri and Alexa is constantly improving, these assistants still operate solely in the task-completion segment, with limited functionality. They can read the news, play a song, set an alarm clock, or search for basic terms on the internet. Alexa is said to have developed 63,000 skills – a number that is increasing by roughly 36 per day.2 But venture outside of these predefined tasks, and the voice assistants struggle to make sense of the conversation.
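
To make the limits of predefined tasks concrete, here is a deliberately simplified sketch of a task-completion assistant. It is a toy illustration only, not how Siri or Alexa work: the trigger phrases, replies, and the `respond` function are hypothetical names invented for this example.

```python
# Toy illustration only: real assistants rely on far richer language models.
# The trigger phrases and replies below are hypothetical.
SKILLS = {
    "read the news": "Here are today's headlines.",
    "play a song": "Playing a song.",
    "set an alarm": "Alarm set.",
}

FALLBACK = "Sorry, I didn't quite get that."


def respond(utterance: str) -> str:
    """Return the reply of the first skill whose trigger phrase appears in the utterance."""
    text = utterance.lower()
    for trigger, reply in SKILLS.items():
        if trigger in text:
            return reply
    # Anything outside the predefined tasks falls through to the fallback.
    return FALLBACK


print(respond("Please set an alarm for 7 am"))  # matches a predefined skill
print(respond("How was your weekend?"))         # social chat: no skill matches
```

The second request is ordinary social chat, yet the assistant can only fall back to an apology because nothing in its fixed list of skills matches.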

Leveraging massive computing power for Natural Language Processing

As conversational AI advances, it could drastically change the way we input commands into a computer, challenging the keyboard and point-and-click. In fact, the average person speaks somewhere between 120 and 150 words per minute, but types only between 38 and 40 words per minute.3,4 If machines can understand speech and reach conclusions based on it, the speed of information flow could grow significantly.
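
The size of that gap follows directly from the words-per-minute figures quoted above. The short calculation below is a back-of-the-envelope sketch; the function and variable names are ours, and it assumes speech is transcribed without errors or corrections.

```python
# Words-per-minute ranges quoted in the text: (low, high).
SPEAKING_WPM = (120, 150)
TYPING_WPM = (38, 40)


def speedup_range(speaking, typing):
    """Return the (smallest, largest) ratio of speaking speed to typing speed."""
    low = speaking[0] / typing[1]   # slowest speaker vs. fastest typist
    high = speaking[1] / typing[0]  # fastest speaker vs. slowest typist
    return low, high


low, high = speedup_range(SPEAKING_WPM, TYPING_WPM)
print(f"Speech is roughly {low:.1f}x to {high:.1f}x faster than typing")
```

On these figures, speaking delivers words roughly three to four times faster than typing.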

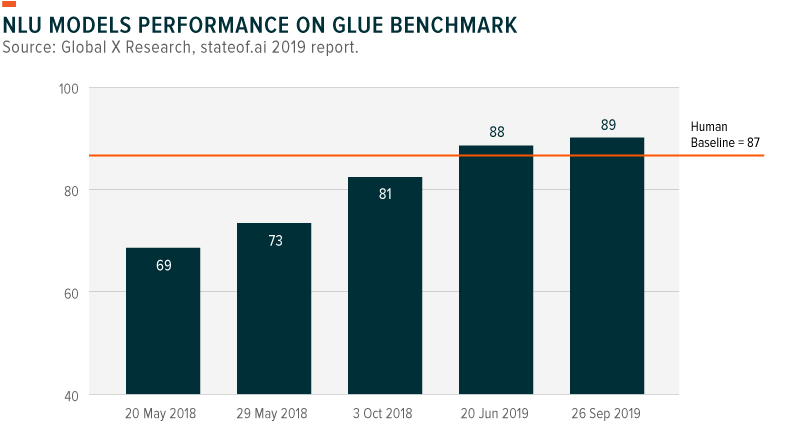

Natural language understanding (NLU) is one branch of AI that leverages computing power to understand language inputs, whether speech, text, or a combination of the two. For NLU technology to be maximally useful, it must be able to process language in a way that is not exclusive to a single task, genre, or dataset.5 The General Language Understanding Evaluation (GLUE) benchmark measures and scores the performance of NLU models across a variety of tasks, and state-of-the-art models have recently surpassed the human baseline set by the benchmark.

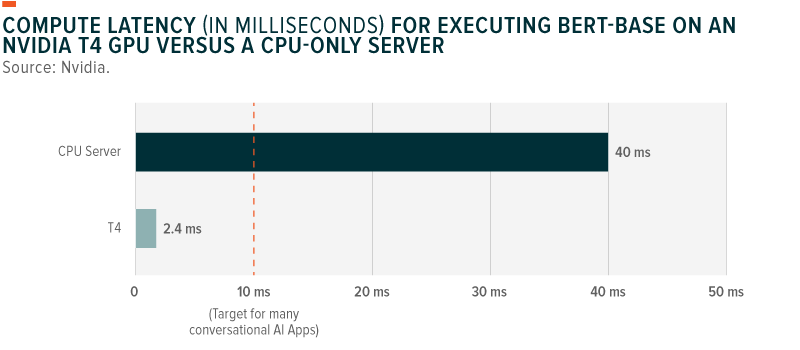

Today, enhanced computing power from graphics processing units (GPUs) makes natural language processing (NLP) possible. Recently, leading GPU maker Nvidia announced that its AI platform achieved a sizable breakthrough: it trained one of the world’s most advanced NLP/AI language models, Bidirectional Encoder Representations from Transformers (BERT), in a record-breaking 53 minutes, with language models now completing inference (that is, understanding and reaching a conclusion based on information received) in just 2.2 milliseconds.6,7 For perspective, the typical training time for BERT used to be several days, and inference had a 10-millisecond processing threshold.8 Performance improvements such as these raise speed and accuracy, setting the stage for real-world applications.9
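
To put those figures in proportion, the sketch below computes how far 2.2 milliseconds sits under the 10-millisecond threshold, and how the 53-minute training run compares with a multi-day baseline. The text says only "several days", so the 2-, 3-, and 4-day baselines in the loop are illustrative assumptions, not reported numbers; the function and constant names are ours.

```python
# Figures quoted in the text.
TRAINING_MINUTES = 53
INFERENCE_MS = 2.2
INFERENCE_THRESHOLD_MS = 10.0


def training_speedup(baseline_days: float) -> float:
    """Ratio of a baseline training time (in days) to the 53-minute run."""
    return baseline_days * 24 * 60 / TRAINING_MINUTES


inference_headroom = INFERENCE_THRESHOLD_MS / INFERENCE_MS
print(f"Inference is about {inference_headroom:.1f}x under the 10 ms threshold")

# Hypothetical baselines: the source does not say how many days "several" means.
for days in (2, 3, 4):
    print(f"{days}-day baseline -> about {training_speedup(days):.0f}x faster training")
```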

Faster training and inference are expected to increase the rate at which these chatbot systems are deployed in commercial applications and to consumers.10 The share of service interactions handled by NLP/AI technologies is expected to grow to 15% by 2021, a 4X increase from 2017.11 There were approximately 2.5 billion digital voice assistants in use in 2018, a number that could more than triple to 8 billion by 2023.12
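
Two numbers implied by these forecasts can be worked out directly. The sketch below assumes "4X increase" means four times the 2017 level, and it assumes smooth compound growth between 2018 and 2023; both are our reading of the figures, and the function names are ours.

```python
def implied_base(value_now: float, multiple: float) -> float:
    """Starting value implied by an ending value and a growth multiple."""
    return value_now / multiple


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate between two values."""
    return (end / start) ** (1 / years) - 1


# 15% of interactions by 2021, read as four times the 2017 share.
share_2017 = implied_base(15.0, 4.0)
print(f"Implied 2017 share: {share_2017:.2f}%")

# 2.5 billion voice assistants in 2018 growing to 8 billion by 2023.
growth = cagr(2.5, 8.0, 2023 - 2018)
print(f"Implied annual growth in voice assistants: {growth:.1%}")
```

Under these assumptions, the 2017 share works out to 3.75%, and the installed base of voice assistants would need to grow by roughly 26% a year to reach 8 billion.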

The future of conversational AI

Conversational AI has moved from its experimental phase to its application phase. Possible applications cut across industries and go well beyond smart speakers and chatbots. Conversational AI is expected to be omnichannel, multi-device, and multi-language, potentially disrupting the nearly $86 billion global outsourced services market by leveraging chatbots, messaging apps, digital/personal assistants, and voice search.13

By sector, examples of potential use cases include:

- Healthcare: Conversational AI could help increase telemedicine’s effectiveness with better evaluation, diagnostic, and clinical services for patients on a 24/7 basis, all via phone. Telemedicine utilization rates remain below 10% in the U.S. and currently rely on human interactions, but as conversational AI continues to evolve, such solutions could offer personalized suggestions and help patients assess a condition.14 Conversational AI assistants are expected to help with elderly care as well, including treatment reminders and understanding. Mental health is another segment where conversational AI could make inroads.

- Transportation: Conversational AI will play a critical role in assisting passengers in autonomous vehicles, a technology that auto manufacturers expect to launch initially in a geo-fenced environment in the next 2-3 years. Conversational AI will help passengers select destinations and preferred routes, check charge levels, and manage infotainment options.

- Customer Service: Conversational AI could be set to disrupt the call center industry, cutting across retail, travel and hospitality, insurance, and life sciences segments, among others. Approximately 3 million people work in call centers in the U.S.15 Yet many companies outsource these services to lower-cost countries, significantly increasing the number of people working in call centers globally. Conversational AI could also provide the ability to expand global reach with support for multiple languages.

Conclusion

We believe we are at an inflection point where conversational technologies have the power to evolve and transform industries. Recent progress has made conversational AI useful to millions of users, ushering in the next generation of user interface with machines. As the technology continues to evolve, we expect opportunities in the space to grow alongside the many applications that begin to leverage the functionality.

Related ETFs

BOTZ: The Global X Robotics & Artificial Intelligence ETF (BOTZ) seeks to invest in companies that potentially stand to benefit from increased adoption and utilization of robotics and artificial intelligence (AI), including those involved with industrial robotics and automation, non-industrial robots, and autonomous vehicles.

AIQ: The Global X Future Analytics Tech ETF (AIQ) seeks to invest in companies that potentially stand to benefit from the further development and utilization of artificial intelligence (AI) technology in their products and services, as well as in companies that provide hardware facilitating the use of AI for the analysis of big data.

Pedro Palandrani
